Frontier AI models have demonstrated genuine capability in vulnerability discovery: finding zero-days, chaining exploitation techniques, and generating working exploits with minimal human guidance. Each generation improves meaningfully on the last, and the security industry is right to take that seriously. But capability and operational utility are not the same thing. The question is not whether frontier AI can find vulnerabilities. The question is whether your organization has the system around that capability to turn a finding into a defended, remediated, and auditable outcome. That distinction, between a model and a system, is where most of the current conversation falls short.

What frontier models do well

Under the right conditions, frontier AI models are the most capable vulnerability discovery engines ever built. Given whitebox access, full source code, and a skilled researcher directing the process, they can reason about code at depth, hypothesize attack chains, and produce working exploits with a level of sophistication that compresses what previously took weeks into hours. This is a genuine advance, and it would be a mistake to dismiss it.

The conditions matter, however. Whitebox access and researcher guidance are not the operating environment of an enterprise security team running production testing across a distributed external attack surface. Independent analysis has consistently shown that even the most capable frontier models report plausible-sounding but spurious vulnerabilities in code that is patched and correct. Raw model output requires skilled human review to separate signal from noise, which means the efficiency gain is real, but it accrues almost entirely to teams that already have the expertise to validate what the model produces.

Where the model ends and the problem begins

A frontier model has no concept of your attack surface. It does not manage scope, credentials, or rate limiting. It does not know which assets are in bounds, which environments are sensitive, or where to concentrate effort across a complex, distributed infrastructure. It starts from scratch every time, with no memory of what your organization looked like last quarter or last week.

More consequentially, a model does not triage its own findings, produce compliance-ready reports, or connect to the remediation workflows your team actually uses. It generates output. What happens to that output, how it is validated, structured, prioritized, tracked, and closed, depends entirely on the scaffolding built around it. Without that scaffolding, model capability does not translate into reduced exposure. It translates into a workload.
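To make the scaffolding point concrete, here is a minimal sketch of a finding lifecycle in which nothing can be tracked or closed until it has been validated. The `Status` values, the `Finding` fields, and the 1 to 5 severity scale are illustrative assumptions, not a description of any particular product or ticketing system.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    RAW = "raw"              # straight from the model, unreviewed
    VALIDATED = "validated"  # confirmed by a human reviewer
    REJECTED = "rejected"    # reviewed and found to be a false positive
    TRACKED = "tracked"      # handed to a remediation workflow
    CLOSED = "closed"        # fixed and retested


# Hypothetical transition table: validation is the only way out of RAW.
ALLOWED = {
    Status.RAW: {Status.VALIDATED, Status.REJECTED},
    Status.VALIDATED: {Status.TRACKED},
    Status.TRACKED: {Status.CLOSED},
    Status.REJECTED: set(),
    Status.CLOSED: set(),
}


@dataclass
class Finding:
    title: str
    severity: int  # assumed scale: 1 (low) to 5 (critical)
    status: Status = Status.RAW
    history: list = field(default_factory=list)

    def advance(self, new_status: Status) -> None:
        """Move to new_status, refusing any transition not in ALLOWED."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(
                f"cannot move {self.status.value} -> {new_status.value}"
            )
        self.history.append(self.status)
        self.status = new_status


def prioritize(findings):
    """Return only validated findings, most severe first."""
    validated = [f for f in findings if f.status is Status.VALIDATED]
    return sorted(validated, key=lambda f: f.severity, reverse=True)
```

Raw model output enters as `RAW` and stays there until someone reviews it. Everything downstream, from prioritization to closure, operates only on what survived that review.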

What a production pentest actually requires

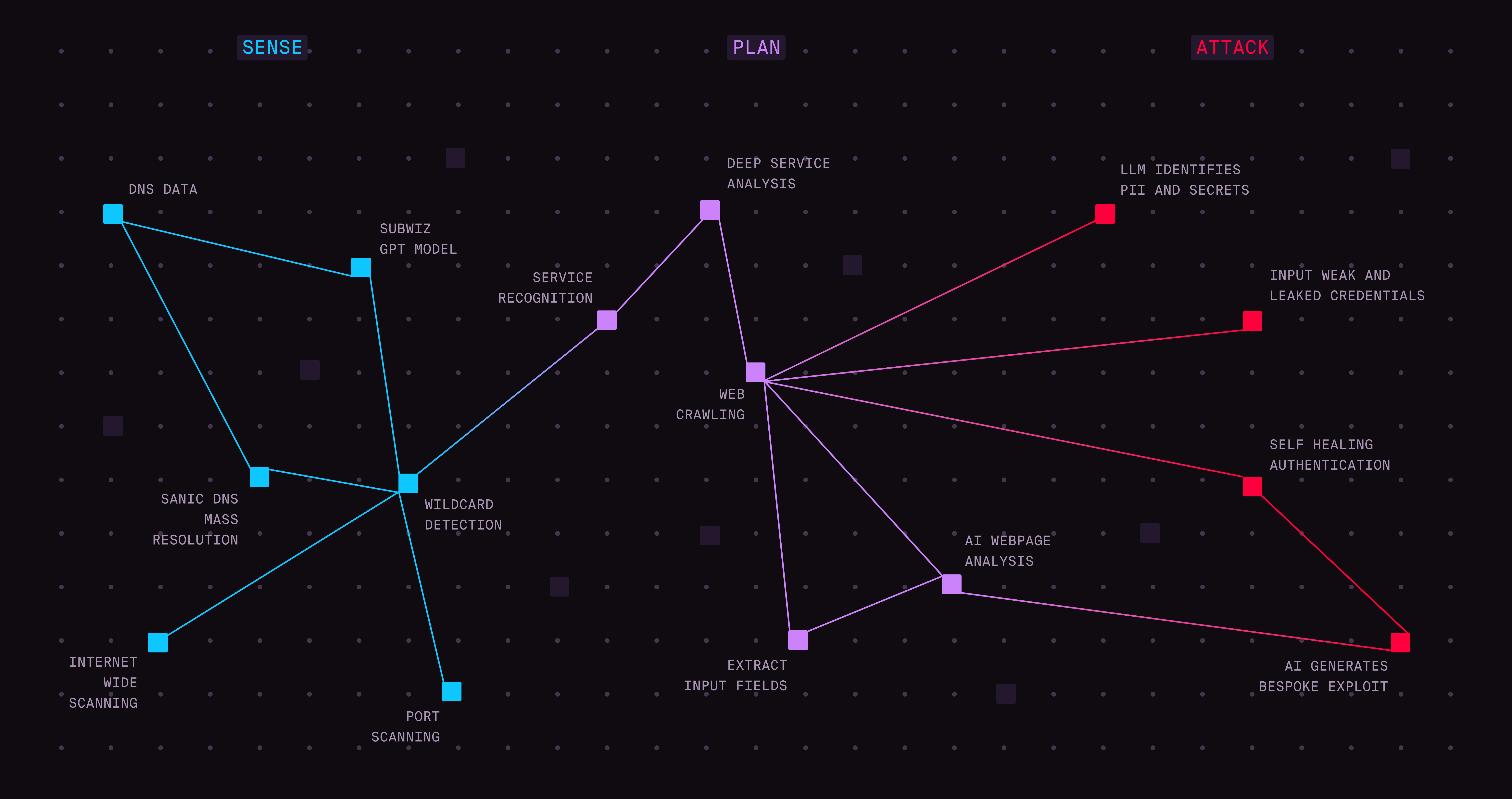

Enterprise security testing is an operational problem as much as a technical one. It requires scope management: defining the target, excluding sensitive areas, configuring rate limits, and supplying application context and credentials across multiple roles. It requires attack surface awareness, so agents enter a test informed by existing knowledge of your external exposure rather than building that picture from zero. It requires orchestration, so effort is allocated dynamically across the highest-impact vectors rather than spread uniformly across a surface too large for any single approach to cover efficiently.

It also requires human judgment at the point where it matters most: validating findings before they reach the teams responsible for acting on them. And it requires output that security, compliance, and audit functions can actually use: structured reports with executive summaries, methodology documentation, risk ratings, reproduction steps, and remediation guidance mapped to SOC 2, ISO 27001, NIS2, and DORA.
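The scope-management requirement described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the `Scope` class, its field names, and the default rate limit are hypothetical, and a real scope definition would also carry credentials, roles, and application context.

```python
from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network


@dataclass
class Scope:
    include_domains: list                                  # e.g. ["example.com"]
    exclude_hosts: list = field(default_factory=list)      # sensitive hosts to skip
    include_networks: list = field(default_factory=list)   # CIDR ranges
    max_requests_per_second: int = 5                       # assumed default rate limit

    def in_scope(self, host: str) -> bool:
        """Return True only if host is explicitly included and not excluded."""
        host = host.lower().rstrip(".")
        if host in (h.lower() for h in self.exclude_hosts):
            return False  # exclusions always win
        try:
            addr = ip_address(host)
        except ValueError:
            # Not an IP literal: match the domain itself or any subdomain.
            return any(
                host == d or host.endswith("." + d)
                for d in self.include_domains
            )
        return any(addr in ip_network(n) for n in self.include_networks)
```

For example, with `Scope(include_domains=["example.com"], exclude_hosts=["payments.example.com"])`, a call to `in_scope("app.example.com")` passes while `in_scope("payments.example.com")` does not. The design choice worth noting is that exclusions are checked first, so a sensitive host stays out of bounds even when a broader include rule would match it.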

How NOVA is built differently

Hadrian NOVA is a purpose-built agentic pentesting platform that wraps AI capability in the operational infrastructure enterprise security testing demands. Every test is seeded with Hadrian's continuous external exposure intelligence, covering asset inventory, technology stack, and configuration history, so agents begin with an informed picture of your attack surface rather than an empty one. An Orchestrator coordinates a hierarchy of specialized agents, allocating effort dynamically and concentrating on the highest-impact vectors as the test develops.
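As a generic illustration of what allocating effort dynamically can mean, the sketch below splits a fixed testing budget across attack vectors in proportion to an estimated impact score, and can be re-run as those scores change during a test. It is not Hadrian's implementation: the function name, the scoring inputs, and the largest-remainder rounding are all assumptions chosen for clarity.

```python
def allocate_budget(scores: dict, total_budget: int) -> dict:
    """Split an integer budget across vectors in proportion to their scores.

    Uses largest-remainder rounding so the parts always sum to total_budget.
    """
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("at least one vector needs a positive score")

    # Exact (fractional) share for each vector.
    exact = {k: total_budget * v / total for k, v in scores.items()}
    # Start from the floor of each share.
    alloc = {k: int(x) for k, x in exact.items()}

    # Hand out the units lost to rounding, largest fractional part first.
    leftover = total_budget - sum(alloc.values())
    by_remainder = sorted(exact, key=lambda k: exact[k] - alloc[k], reverse=True)
    for k in by_remainder[:leftover]:
        alloc[k] += 1
    return alloc
```

If early results suggest the API surface is more promising than first estimated, raising its score and calling `allocate_budget` again shifts effort toward it. That re-scoring loop is the difference between dynamic allocation and uniform coverage.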

Every finding is validated by Hadrian's offensive security specialists before it reaches your team. The result is zero false positives, not as an aspirational target, but as a structural property of how the platform operates. Findings flow directly into Jira, ServiceNow, and Hadrian Atlas, with retesting built into the remediation workflow at no additional cost. A full compliance-ready penetration test report, satisfying SOC 2, ISO 27001, NIS2, and DORA requirements, is produced as standard output. Security teams configure scope and launch. Results are available within hours.

Better models make better platforms

This is not a competition between frontier AI and pentesting platforms. As frontier models improve, platforms like NOVA get meaningfully smarter. NOVA's architecture is designed to incorporate advancing model capability while maintaining the orchestration, validation, and compliance infrastructure that turns raw intelligence into trustworthy, actionable results. The two are additive, not alternatives.

The question for senior security leaders is not whether to take frontier AI seriously. That case is already made. The question is whether your organization has the operational system to convert that capability into outcomes: scoped, validated, reported, tracked, and remediated. A model that finds an exposure your team cannot act on has not improved your security posture. It has added to your backlog.

To see how NOVA runs structured offensive security tests against your external attack surface and delivers validated findings within hours, explore the full breakdown.
